robots.txt, often simply called the robots file, is an ordinary text document stored in the site’s root folder. Together with the dynamic XML sitemap, it is one of the most important files for search engines: through robots.txt, they learn which pages to index and which ones to skip. A single mistake in the robots file can close the entire site to Yandex and Google, or open up sections with confidential information. That is why SEOs analyze robots.txt first when preparing an SEO audit.

Historically, the standard dates back to 1994, when Martijn Koster proposed the Robots Exclusion Protocol and crawler developers agreed by consensus to follow it voluntarily. It was not a W3C decision, and the protocol was formally standardized only in 2022, as RFC 9309. Search robots currently number more than three hundred. Since 1994, all major search engines have read the robots file when accessing a site and used it as a guide to its sections and pages.

Which sites need a robots.txt file and why

When a site is developed, a robots file should always be added. At a minimum, the owner of the resource needs to check that all URLs meant for search are open for indexing. In addition, setting up the robots file is part of the site’s search engine optimization. Pay attention to the file’s directives and close some sections when:

- There are technical pages: separate URLs with contact forms, registration forms, and other modules for submitting information. These pages have no value for promotion, and the best course is to close them from indexing;

- Site search results are displayed on a separate page. As with forms, temporary pages with internal search results are best excluded from search engine results;

- Sections contain pages or blocks with personal information. As a rule, this concerns customer databases stored in the site structure. Remember that it doesn’t matter whether a section is linked from the site: the information can still be indexed if it is not closed with the appropriate directive;

- The site has a mirror or a temporary address with an early development version. Mirrors are extremely harmful for promotion: if a website has them, close them all from indexing, otherwise the site may fall under the affiliate filter.
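
The cases above can be sketched as a small robots file and checked with Python’s standard `urllib.robotparser` module. This is only an illustration: the paths `/search/`, `/register/`, `/feedback/` and `/clients/` are hypothetical and should be replaced with the sections your site actually uses.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical section names, used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /register/
Disallow: /feedback/
Disallow: /clients/
"""


def is_allowed(robots_txt: str, path: str, agent: str = "*") -> bool:
    """Return True if `agent` may crawl `path` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, path)


print(is_allowed(ROBOTS_TXT, "/search/?q=shoes"))  # closed section
print(is_allowed(ROBOTS_TXT, "/catalog/shoes/"))   # ordinary page
```

The first call reports the search page as closed, the second reports the catalog page as open. Note that the standard-library parser only does simple prefix matching, so it is suitable for quick checks like this one rather than for reproducing every nuance of a particular search engine.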

How to create and edit robots.txt

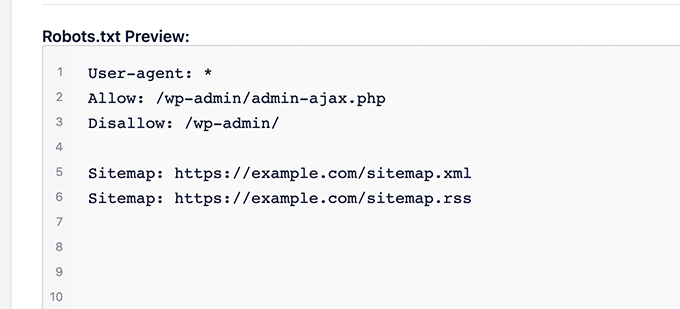

Many content management systems create the robots file automatically, and you can usually adjust it through the administrative panel. In other cases, it is enough to create a plain text file in Notepad (available on any Windows-based PC) and write the necessary directives for search engines directly in it. Alternatively, numerous online services let you create, fill out, and download a robots file in the required format. Upload the completed robots.txt to the root directory of your site. If everything is done correctly, the content you entered in Notepad will appear at yoursite.com/robots.txt.
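
To confirm that the file really is served from the site root, you can build the robots.txt address from any page URL and download it. The sketch below assumes the input is an absolute URL with a scheme; the helper names `robots_url` and `fetch_robots` and the `example.com` address are illustrative only.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def robots_url(page_url: str) -> str:
    """Build the robots.txt address for the site that serves `page_url`."""
    parts = urlsplit(page_url)
    # robots.txt always lives at the root of the scheme + host combination.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def fetch_robots(page_url: str, timeout: float = 10.0) -> str:
    """Download robots.txt for the site (performs a real network request)."""
    with urlopen(robots_url(page_url), timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


print(robots_url("https://example.com/blog/post?id=1"))
```

Calling `fetch_robots` with your own domain should return exactly the text you uploaded; if it returns an error or different content, the file is probably not in the root directory.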

You can edit the file in two ways: create a new file on your computer in the same way and replace the one in the site root, or open the hosting file manager, find robots.txt in the root (or through search), and edit it online.

How to set up robots.txt?

Working with the robots file is both simple and complex. Adjustments are made quickly, but a mistake can have critical consequences. To figure out how to configure robots.txt properly, let’s look at its syntax and rules.

Directives

Every standard robots.txt contains User-agent directives, which specify the search engine robot that the rules below them apply to. You can write general rules for all robots as well as specific rules for Yandex and Google.

Examples of User-agents:

User-agent: * – general rules for all search engines

User-agent: Yandex – rules for all Yandex robots

User-agent: YandexBot – rules for the main Yandex indexing robot

User-agent: Googlebot – general rules for all Google bots
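
As a rough illustration of how User-agent groups work, the sketch below defines a general group plus separate groups for Yandex and Googlebot and checks them with Python’s standard `urllib.robotparser`. The paths `/admin/`, `/promo/` and `/drafts/` are hypothetical, and the standard-library parser is only an approximation of how each search engine selects a group.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: every robot skips /admin/, and each named
# search engine gets one extra closed section of its own.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

User-agent: Yandex
Disallow: /admin/
Disallow: /promo/

User-agent: Googlebot
Disallow: /admin/
Disallow: /drafts/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for agent in ("Googlebot", "YandexBot", "Bingbot"):
    for path in ("/admin/", "/promo/", "/drafts/"):
        print(agent, path, parser.can_fetch(agent, path))
```

A robot that finds a group addressed to it follows only that group and ignores the `*` group, which is why `/admin/` is repeated in the Yandex and Googlebot groups here.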

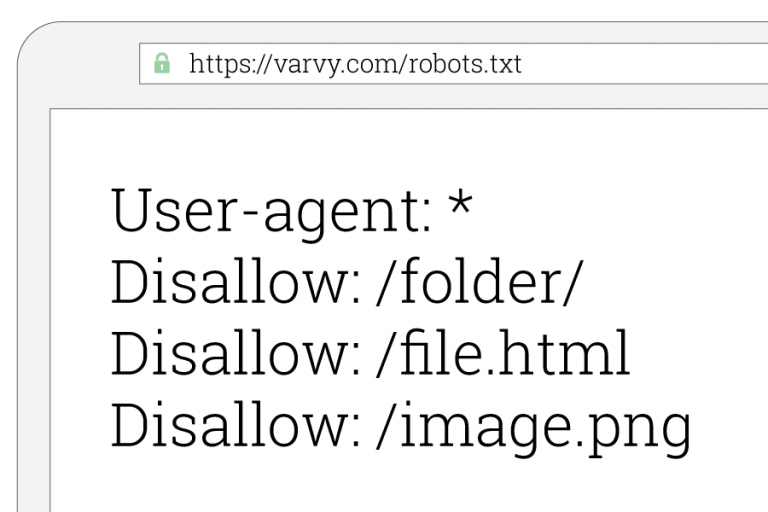

The “Disallow” directive closes information on the site from search engines. It can be applied to the entire resource (just add the line Disallow: /) or to specific sections. As a rule, all temporary addresses and technical domains are closed from indexing right away. When the site is transferred to the production domain, the robots file is copied along with it, and if the “Disallow: /” directive is not removed, the production domain will remain hidden from search engines.
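
A leftover “Disallow: /” is easy to catch with a pre-launch check. The sketch below uses Python’s standard `urllib.robotparser` and treats a blocked home page as the warning sign; the function name `root_is_blocked` and the sample files are assumptions made for the example.

```python
from urllib.robotparser import RobotFileParser

# Typical file for a staging domain: everything is closed.
STAGING = """\
User-agent: *
Disallow: /
"""

# Hypothetical production file: only the admin section is closed.
PRODUCTION = """\
User-agent: *
Disallow: /admin/
"""


def root_is_blocked(robots_txt: str, agent: str = "*") -> bool:
    """Return True if `agent` is not allowed to fetch the home page."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(agent, "/")


print(root_is_blocked(STAGING))     # the site is hidden from robots
print(root_is_blocked(PRODUCTION))  # the home page is open
```

Running such a check against the production file before launch would flag a copied staging configuration.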

The “Allow” directive tells the bot which page or section it may index. It is needed when there are exceptions to a general rule. For example, suppose we closed an entire folder of photos from indexing but want the search engine to index the “map” file located in that folder. In this case, we add an “Allow” rule for that file. Remember that when several rules match the same URL, Google and Yandex do not simply apply the last one: the most specific rule (the one with the longest matching path) takes priority regardless of its position in the file, and if an Allow and a Disallow rule are equally specific, Allow wins.
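
Google and Yandex document that, among the rules matching a URL, the one with the longest path wins, and that Allow wins a tie. A minimal sketch of that precedence logic follows; it handles plain path prefixes only (no wildcards), and the `/photos/map.jpg` path is a hypothetical stand-in for the “map” file from the example.

```python
def is_allowed(rules: list[tuple[str, str]], path: str) -> bool:
    """Apply longest-match precedence to (directive, path_prefix) rules."""
    best_length = -1
    allowed = True  # a URL matched by no rule is allowed
    for directive, prefix in rules:
        if not path.startswith(prefix):
            continue
        is_allow = directive.lower() == "allow"
        longer = len(prefix) > best_length
        tie_won_by_allow = len(prefix) == best_length and is_allow
        if longer or tie_won_by_allow:
            best_length = len(prefix)
            allowed = is_allow
    return allowed


rules = [
    ("Disallow", "/photos/"),
    ("Allow", "/photos/map.jpg"),  # hypothetical file name
]

print(is_allowed(rules, "/photos/map.jpg"))   # exception to the closed folder
print(is_allowed(rules, "/photos/team.jpg"))  # still closed
print(is_allowed(list(reversed(rules)), "/photos/map.jpg"))  # order does not matter
```

Under this rule the map file stays open whether the Allow line is written before or after the Disallow line.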

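The “Host” directive told Yandex which mirror of the site is the main one. It was used, for example, when a site moved to the https protocol, so that the robot would not get confused and would quickly index all pages under the new protocol. Host was only ever recognized by Yandex, which stopped supporting it in 2018; a 301 redirect to the main mirror is used instead.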

The “Sitemap” directive tells the robot where the current dynamic sitemap is located. This is useful when a website has multiple maps: so that the bot does not waste time analyzing an outdated map, it is pointed straight to the current one.
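
To see which sitemap a robots file advertises, you can read the Sitemap lines with Python’s standard `urllib.robotparser` (the `site_maps()` method requires Python 3.8 or later). The `example.com` address below is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Placeholder domain; the Sitemap value must be a full URL.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns a list of sitemap URLs, or None if the file has none.
print(parser.site_maps())
```

The Sitemap line is independent of User-agent groups, so it can be placed anywhere in the file.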

Special characters

There are 4 main types of characters applicable to each directive – “/”, “*”, “$”, “#”:

“/” – a forward slash starts every path and indicates what exactly the disallow directive hides from the search engine; it also separates sections of the path, just as in a URL. If you leave “/” without specifying a section, the rule applies to the entire site;

“*” – the asterisk stands for any sequence of characters, including an empty one. It is implied at the end of every rule, so a rule matches every URL that begins with the specified path;

“$” – the dollar sign marks the end of the URL and cancels the implied trailing asterisk, which matters when folders and files have names that begin the same way;

“#” – the pound sign starts a comment: search engines ignore everything from “#” to the end of the line. It is rarely used now, but through “#” webmasters can leave notes for colleagues.
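
The behaviour of these characters can be approximated by translating a rule path into a regular expression. This is a simplified sketch, not the exact algorithm of any search engine, and the function names are invented for the example.

```python
import re


def strip_comment(line: str) -> str:
    """Drop everything from '#' to the end of the line."""
    return line.split("#", 1)[0].strip()


def pattern_to_regex(pattern: str):
    """Translate a robots.txt path pattern into a compiled regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # '*' matches any sequence of characters; everything else is literal.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    # Without '$' the pattern is a prefix, i.e. a trailing '*' is implied.
    return re.compile(body + (r"\Z" if anchored else ""))


def matches(pattern: str, path: str) -> bool:
    """Return True if the rule pattern applies to the URL path."""
    return pattern_to_regex(pattern).match(path) is not None


print(matches("/catalog", "/catalog/shoes"))        # prefix match
print(matches("/catalog$", "/catalog/shoes"))       # '$' stops at the exact path
print(matches("/*.pdf$", "/docs/price.pdf"))        # any PDF file
print(matches("/*.pdf$", "/docs/price.pdf?v=2"))    # URL does not end in .pdf
print(strip_comment("Disallow: /tmp/  # temporary files"))
```

The first and third checks match, while the second and fourth do not, because “$” requires the URL to end exactly where the pattern ends.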

Conclusion

Filling out the file incorrectly can seriously harm search engine promotion, which is exactly why editing and checking robots.txt is a must. Before making changes to the file, you can always check them in the search engine’s webmaster panel: if everything is written correctly, the service will say so, and it will highlight any errors it finds. In addition, ready-made robots.txt settings exist for various CMSs; at the very least, they will not harm the site or interfere with page indexing.